Abstract

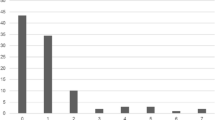

Previous research has begun to address the importance of methodological differences in Web-based research. This paper presents four studies investigating whether sample type, financial incentives, the time when personal information is requested, table design, and the method of obtaining informed consent influence dropout and sample characteristics (both demographics and measured attitudes). Undergraduates were less likely to drop out than nonstudents, and nonstudents offered a financial incentive were less likely to drop out than those offered no incentive. Complex tables, tables that were too wide, requests for personal information on the first page, and additional informed consent procedures each provoked early dropout. As expected, nonstudents and those presented with complex tables showed more measurement error and attitude differences. Asking for personal information and imposing additional consent procedures affected the demographic makeup of the sample, raising challenges to external validity.

Additional information

This research constituted the first author’s doctoral dissertation at the University of Nebraska-Lincoln under the guidance of Steve Penrod, Brian Bornstein, Cal Garbin, John Flowers, and Bob Schopp.

Cite this article

O’Neil, K.M., Penrod, S.D. & Bornstein, B.H. Web-based research: Methodological variables’ effects on dropout and sample characteristics. Behavior Research Methods, Instruments, & Computers 35, 217–226 (2003). https://doi.org/10.3758/BF03202544

DOI: https://doi.org/10.3758/BF03202544