Abstract

Purpose

To test the impact of method of administration (MOA) on the measurement characteristics of items developed in the Patient-Reported Outcomes Measurement Information System (PROMIS).

Methods

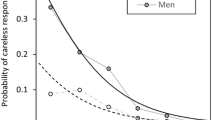

Two non-overlapping parallel 8-item forms from each of three PROMIS domains (physical function, fatigue, and depression) were completed by 923 adults (age 18–89) with chronic obstructive pulmonary disease, depression, or rheumatoid arthritis. In a randomized cross-over design, subjects answered one form by interactive voice response (IVR) technology, paper questionnaire (PQ), personal digital assistant (PDA), or personal computer (PC) on the Internet, and a second form by PC, in the same administration. Structural invariance, equivalence of item responses, and measurement precision were evaluated using confirmatory factor analysis and item response theory methods.

Results

Multigroup confirmatory factor analysis supported equivalence of factor structure across MOA. Analyses by item response theory found no differences in item location parameters and strongly supported the equivalence of scores across MOA.

Conclusions

We found no statistically or clinically significant differences in score levels in IVR, PQ, or PDA administration as compared to PC. Availability of large item response theory-calibrated PROMIS item banks allowed for innovations in study design and analysis.

Abbreviations

- CAT: Computerized adaptive testing
- COPD: Chronic obstructive pulmonary disease
- DEP: Depression
- FAT: Fatigue
- IRT: Item response theory
- IVR: Interactive voice response
- MOA: Method of administration
- PC: Personal computer
- PDA: Personal digital assistant
- PF: Physical functioning
- PQ: Paper questionnaire
- NLMIXED: SAS procedure for estimating mixed models
- PRO: Patient-reported outcomes
- PROMIS: Patient-Reported Outcomes Measurement Information System
- WLSMV: Weighted least squares with mean and variance adjustment

Acknowledgments

The Patient-Reported Outcomes Measurement Information System (PROMIS) is a National Institutes of Health (NIH) Roadmap initiative to develop a computerized system measuring patient-reported outcomes in respondents with a wide range of chronic diseases and demographic characteristics. PROMIS was funded by cooperative agreements to a Statistical Coordinating Center (Northwestern University, PI: David Cella, PhD, U01AR52177) and six Primary Research Sites (Duke University, PI: Kevin Weinfurt, PhD, U01AR52186; University of North Carolina, PI: Darren DeWalt, MD, MPH, U01AR52181; University of Pittsburgh, PI: Paul A. Pilkonis, PhD, U01AR52155; Stanford University, PI: James Fries, MD, U01AR52158; Stony Brook University, PI: Arthur Stone, PhD, U01AR52170; and University of Washington, PI: Dagmar Amtmann, PhD, U01AR52171). NIH Science Officers on this project are Deborah Ader, PhD, Susan Czajkowski, PhD, Lawrence Fine, MD, DrPH, Louis Quatrano, PhD, Bryce Reeve, PhD, William Riley, PhD, and Susana Serrate-Sztein, PhD. This manuscript was reviewed by the PROMIS Publications Subcommittee prior to external peer review. The authors would like to thank two anonymous PROMIS reviewers and two journal reviewers for comments on a previous version of this manuscript. See the web site at www.nihpromis.org for additional information on the PROMIS cooperative group.

Appendix

The standard graded response IRT model can be formulated:

where $\theta_j$ is the latent health of person $j$ (here: physical functioning, fatigue, or depression), $\alpha_i$ is the discrimination parameter for item $i$, $\lambda_i$ is the location parameter for item $i$, and $\tau_{ic}$ is the item category parameter. An extended graded response model can be formulated in the following way:

where $\alpha_o$ and $\lambda_o$ represent the potential effect of item order (being administered in the second part of the form as opposed to the first) on the item discrimination and location parameters, $\alpha_p$ and $\lambda_p$ represent the potential effect of IVR phone administration (as opposed to Internet administration), and $\alpha_q$ and $\lambda_q$ represent the potential effect of paper-and-pencil questionnaire administration (as opposed to Internet administration).
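As a sketch only, the two models can be written in one common logistic parameterization that is consistent with the symbols defined above. The exact functional form, the sign convention for $\tau_{ic}$, the additive way in which the effects enter, and the indicator variables $o_{ij}$, $p_{ij}$, and $q_{ij}$ (equal to 1 when person $j$ answers item $i$ in the second part of the form, by IVR, or on paper, respectively, and 0 otherwise) are assumptions made for illustration and may differ from the authors' formulation.

```latex
% Standard graded response model (assumed logistic form)
\begin{align}
P(Y_{ij} \ge c \mid \theta_j)
  &= \frac{\exp\bigl(\alpha_i(\theta_j - \lambda_i - \tau_{ic})\bigr)}
          {1 + \exp\bigl(\alpha_i(\theta_j - \lambda_i - \tau_{ic})\bigr)}
\end{align}

% Extended model: order and mode effects shift discrimination and location
% (additive effects and the indicators o_{ij}, p_{ij}, q_{ij} are assumptions)
\begin{align}
P(Y_{ij} \ge c \mid \theta_j)
  &= \frac{\exp\bigl(\alpha^{*}_{ij}(\theta_j - \lambda^{*}_{ij} - \tau_{ic})\bigr)}
          {1 + \exp\bigl(\alpha^{*}_{ij}(\theta_j - \lambda^{*}_{ij} - \tau_{ic})\bigr)} \\
\alpha^{*}_{ij} &= \alpha_i + \alpha_o\, o_{ij} + \alpha_p\, p_{ij} + \alpha_q\, q_{ij} \\
\lambda^{*}_{ij} &= \lambda_i + \lambda_o\, o_{ij} + \lambda_p\, p_{ij} + \lambda_q\, q_{ij}
\end{align}
```

Under this reading, all order and mode effects equal to zero reduces the extended model to the standard one, which is the equivalence hypothesis being tested.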

The model was estimated using SAS PROC NLMIXED. The item parameters $\alpha_i$, $\lambda_i$, and $\tau_{ic}$ were initially treated as known constants and fixed to the values estimated in the PROMIS item bank development calibrations. In additional analyses, $\alpha_i$, $\lambda_i$, and $\tau_{ic}$ were estimated for each item using the current sample. The mean and standard deviation of $\theta$ were estimated separately for each diagnostic group.
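To illustrate how a mode effect would act when the item parameters are held fixed, the following minimal Python sketch computes response category probabilities for a single item under a logistic graded response model. It is not the SAS estimation described above: the function names, the additive form of the shifts `d_alpha` and `d_lam`, and all numeric values are hypothetical and chosen only for illustration.

```python
import math


def grm_category_probs(theta, alpha, lam, taus, d_alpha=0.0, d_lam=0.0):
    """Category probabilities for one item under a logistic graded response model.

    theta   : latent health of the respondent
    alpha   : item discrimination (assumed positive)
    lam     : item location
    taus    : increasing category parameters, one per boundary between categories
    d_alpha : hypothetical additive mode/order effect on discrimination
    d_lam   : hypothetical additive mode/order effect on location
    """
    a = alpha + d_alpha
    b = lam + d_lam
    # Cumulative probabilities P(Y >= c), bracketed by 1 (lowest) and 0 (beyond highest).
    cum = [1.0]
    cum += [1.0 / (1.0 + math.exp(-a * (theta - b - t))) for t in taus]
    cum += [0.0]
    # Each category probability is the difference of adjacent cumulative probabilities.
    return [cum[c] - cum[c + 1] for c in range(len(cum) - 1)]


def expected_score(probs):
    """Expected item score with categories scored 0, 1, 2, ..."""
    return sum(c * p for c, p in enumerate(probs))


# Illustrative values only (not PROMIS calibration values).
taus = [-1.5, -0.5, 0.5, 1.5]
pc_probs = grm_category_probs(theta=0.0, alpha=2.0, lam=0.0, taus=taus)
ivr_probs = grm_category_probs(theta=0.0, alpha=2.0, lam=0.0, taus=taus, d_lam=0.2)

print("PC :", [round(p, 3) for p in pc_probs], round(expected_score(pc_probs), 3))
print("IVR:", [round(p, 3) for p in ivr_probs], round(expected_score(ivr_probs), 3))
```

In this sketch, a positive location shift lowers the expected item score at a given level of $\theta$, which is the kind of systematic score difference between methods of administration that the analysis was designed to detect.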

Cite this article

Bjorner, J.B., Rose, M., Gandek, B. et al. Difference in method of administration did not significantly impact item response: an IRT-based analysis from the Patient-Reported Outcomes Measurement Information System (PROMIS) initiative. Qual Life Res 23, 217–227 (2014). https://doi.org/10.1007/s11136-013-0451-4

DOI: https://doi.org/10.1007/s11136-013-0451-4